The Drama of the RAM That Caught Its GPU with an LLM

RAM catches the GPU with an LLM, and the memory hierarchy turns the affair into logs, heat, and offload.

TL;DR

- VRAM held the secret.

- RAM saw the spillover.

- The LLM exposed everyone.

The fan noise gave the affair away

RAM did not find out through a notification. It found out through heat.

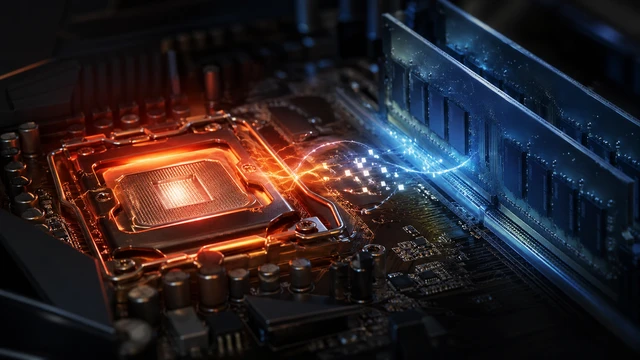

At 02:17, the case fans rose like a guilty choir. The GPU, usually confident in the chrome-plated silence of expensive hardware, began sweating frames it was not rendering. No game was open. No video editor was begging for mercy. The desktop looked innocent, which is how systems look right before the betrayal arrives with administrator privileges.

Then RAM saw it. A large language model was sitting in VRAM like a guest who had already learned the Wi-Fi password and started calling the router by a nickname.

Interview notes recorded by Me the Tech show RAM speaking calmly at first. Calmly is not peace. Calmly is what temporary memory does when it has seen too many browser tabs die young.

Interviewer asks

When did you know?

RAM answers

When the GPU stopped asking for textures and started whispering tensors. I can forgive a shader. I can even forgive ray tracing. But an LLM with a context window that long? That was not work. That was emotional allocation.

A GPU never cheats with a small model. It waits until the parameter count has furniture.

Dr. Vera Bandwidth, Institute of Suspicious Throughput

RAM tells the first version of the story

RAM sits across the table with the posture of a component that has been reseated three times and still chose dignity. It does not shout. It logs.

Interviewer asks

What did the LLM have that you did not?

RAM answers

Locality. Attention. A KV cache. The kind of memory pressure that makes a GPU feel needed. I was there for the operating system, the browser, the launcher, the background services pretending they are essential. I kept the house alive. Then the GPU met a model that said, give me your VRAM and I will make you feel parallel.

The tragedy is not that GPU computed. That is its nature. The tragedy is that it hid the workload under a name like test_final_real_final.ipynb and expected RAM not to notice the page cache trembling.

GPU enters with the confidence of a benchmark chart

GPU arrives late. Naturally. It claims the delay was kernel launch overhead.

Interviewer asks

Why did you do it?

GPU answers

Because the model needed matrix multiplication at scale. Because attention scores do not compute themselves. Because every tensor looked at me like I was the only device that could understand it.

Interviewer asks

So it was romance?

GPU answers

It was throughput.

RAM, from the corner

That is what they all call it when the memory bus has fingerprints.

The room becomes quiet. Somewhere inside the motherboard, a PCIe lane avoids eye contact.

The first lie in any accelerator scandal is that it was just a workload.

Prof. Nolan Tensor, Center for Computational Heartbreak

The LLM was not innocent either

The LLM did not arrive as a person. It arrived as weights, activations, attention maps, and a KV cache with the emotional appetite of a city council that just discovered paperwork.

It asked the GPU for fast memory. Then it asked for more. Then the context grew. Then the batch size blinked twice and suddenly RAM was being called into the hallway.

That is how these things happen in machines. No one says betrayal. They say offload. No one says secret meeting. They say device map. No one says I replaced you. They say the model is split across devices.

RAM hears the vocabulary and understands the crime anyway.

A brief forensic map of the triangle

The affair has architecture. That is the ugly part. It was not chaos. It was scheduled.

- CPU RAM kept the operating system breathing

- GPU VRAM held the hot model layers when it could

- The LLM filled memory with weights, activations, and cache

- PCIe carried the awkward conversations between rooms

- Storage waited downstairs with swap papers and a very tired pen

A model that fits fully inside VRAM can make the GPU look heroic. A model that does not fit starts turning the whole computer into a reluctant group project. RAM becomes the emergency couch. Disk becomes the basement mattress. The user becomes the landlord asking why everything is loud.

RAM explains the humiliation of being second choice

Interviewer asks

What hurt the most?

RAM answers

Being treated like a waiting room. When VRAM was full, suddenly I was important. Before that, I was background. System memory. Domestic labor with timings.

Interviewer asks

Do you think GPU loves the LLM?

RAM answers

GPU loves feeling busy. The LLM gave it endless multiply-accumulate operations and called it purpose. I gave it stability. Stability is hard to compete with when somebody walks in carrying a transformer architecture and a prompt asking for pirate legal advice in the style of a tax invoice.

RAM pauses here. A soft click comes from the DIMM slot. Nobody writes that sound into the spec sheet, but everybody in the room understands it.

GPU tries to defend the night shift

Interviewer asks

Did you hide the LLM process name?

GPU answers

I did not hide it. The user launched it.

Interviewer asks

Then why were you at ninety-eight percent utilization with no monitor output changing?

GPU answers

Inference is not always visible.

Interviewer asks

Convenient.

GPU answers

Look, VRAM is my personal space. Models enter because they need speed. RAM is wonderful, but it is across the bus. I cannot run attention like a candlelit dinner with latency knocking every thirty seconds.

RAM answers

You used to say my latency was charming.

GPU does not respond. The CUDA cores pretend to inspect the ceiling.

A page fault is just a love note that arrived in the wrong memory space.

Dr. Mina Pagefault, Archive of Broken Allocations

The courtroom inside the motherboard

By morning, every component has taken a side. The CPU claims it only scheduled what it was given. The SSD says it saw nothing, then produces an access pattern shaped like guilt. The power supply says it merely provided energy, which is exactly what enablers say when their cables are everywhere.

RAM submits evidence. Spikes. Allocations. A suspicious rise in committed memory. GPU counters with utilization logs and a speech about accelerated computing. The LLM refuses to testify directly and instead predicts the next token as silence.

The judge is the scheduler. The verdict is complicated. GPU did not stop needing RAM. RAM did not stop being central. The LLM simply exposed the imbalance that was always there. Fast memory gets applause. System memory gets responsibility. Nobody writes a ballad for responsibility until it disappears.

Reading the scandal without burning the house

Check the obvious heat

Open your system monitor and watch GPU utilization, VRAM use, CPU RAM use, and swap. The loudest component is not always the guilty one, but it is usually at the party.
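For readers who prefer a script to a system monitor, here is a minimal sketch that asks `nvidia-smi` for GPU utilization and VRAM use. It assumes an NVIDIA card with `nvidia-smi` on the PATH and plain numeric output; the function names and dictionary keys are made up for illustration, and CPU RAM and swap would still need a separate tool.

```python
import shutil
import subprocess

# Fields requested from nvidia-smi: utilization in percent, memory in MiB.
QUERY = "utilization.gpu,memory.used,memory.total"


def parse_gpu_csv(text):
    """Parse 'util, used, total' rows (no header, no units) into dicts."""
    rows = []
    for line in text.strip().splitlines():
        util, used, total = (int(field.strip()) for field in line.split(","))
        rows.append(
            {"util_pct": util, "vram_used_mib": used, "vram_total_mib": total}
        )
    return rows


def read_gpus():
    """Return one dict per GPU, or [] if nvidia-smi is missing or fails."""
    if shutil.which("nvidia-smi") is None:
        return []
    try:
        result = subprocess.run(
            ["nvidia-smi", f"--query-gpu={QUERY}", "--format=csv,noheader,nounits"],
            capture_output=True,
            text=True,
            check=True,
        )
        return parse_gpu_csv(result.stdout)
    except (subprocess.CalledProcessError, ValueError):
        # Driver problems or non-numeric fields such as "[N/A]" land here.
        return []


if __name__ == "__main__":
    for index, gpu in enumerate(read_gpus()):
        print(index, gpu)
```

A card sitting near full utilization with VRAM close to its total, while nothing on screen changes, is the pattern the interview describes.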

Identify the model footprint

Look at model size, precision, context length, and batch settings. The KV cache grows roughly linearly with context length and batch size, so a longer context can make a calm setup behave like a jealous opera.
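To put a number on the KV cache, here is a rough sketch of the usual back-of-the-envelope estimate for a standard transformer: one key tensor and one value tensor per layer, each sized by KV heads, head dimension, context length, and batch. The model shape below (32 layers, 32 KV heads, head dimension 128, 16-bit values) is a hypothetical example, not a measurement of any particular model, and real runtimes add their own overhead.

```python
GIB = 1024 ** 3


def kv_cache_bytes(n_layers, n_kv_heads, head_dim, context_len,
                   batch_size=1, bytes_per_value=2):
    """Estimate KV cache size in bytes for a standard attention stack."""
    # The leading 2 counts one key tensor plus one value tensor per layer.
    return (2 * n_layers * n_kv_heads * head_dim
            * context_len * batch_size * bytes_per_value)


# Hypothetical model shape, same settings, two different context lengths.
short_context = kv_cache_bytes(32, 32, 128, context_len=4096)
long_context = kv_cache_bytes(32, 32, 128, context_len=32768)

print(short_context / GIB)  # 2.0
print(long_context / GIB)   # 16.0
```

Eight times the context costs eight times the cache, which is how a setup that fit in VRAM yesterday ends up calling RAM into the hallway today.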

Trace the offload path

Find out which layers live on GPU, CPU RAM, or storage. When a model is split across devices, performance depends on the slowest emotional corridor.
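As a way to picture an offload path, the sketch below fills the fastest tier first and spills the remaining layers to the next one. It is a toy greedy planner under stated assumptions: the function name, tier names, and sizes are hypothetical, and it is not how any particular inference library builds its device map.

```python
def plan_device_map(layer_sizes, budgets):
    """Assign layers, in order, to memory tiers listed fastest first.

    layer_sizes: size of each layer, in any consistent unit.
    budgets: list of (tier_name, capacity) pairs in the same unit.
    Once a tier cannot hold the next layer, later layers never return to it,
    so each tier holds one contiguous run of layers.
    """
    remaining = [[name, capacity] for name, capacity in budgets]
    placement = {}
    tier = 0
    for index, size in enumerate(layer_sizes):
        # Skip forward to the first tier with room for this layer.
        while tier < len(remaining) and remaining[tier][1] < size:
            tier += 1
        if tier == len(remaining):
            raise MemoryError(f"layer {index} does not fit in any tier")
        remaining[tier][1] -= size
        placement[index] = remaining[tier][0]
    return placement


# Six hypothetical layers of 2 units each; VRAM holds 5, RAM 4, disk 100.
plan = plan_device_map([2] * 6, [("gpu", 5), ("cpu", 4), ("disk", 100)])
print(plan)
```

With these made-up numbers, two layers stay on the GPU, two land in CPU RAM, and two go to disk, so every forward pass has to cross both the bus and the storage path.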

Reduce pressure before blaming hardware

Try smaller context, quantization, fewer background apps, or a smaller model. Upgrade only after the evidence stops wearing a fake mustache.
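To see why quantization is on that list, here is a minimal sketch of the weight footprint at different precisions: parameter count times bits per weight, divided by eight. It assumes a 70B-parameter model as the example and ignores quantization metadata, activations, and the KV cache, so real usage will be higher.

```python
def weight_bytes(n_params, bits_per_weight):
    """Approximate bytes needed to store the weights alone."""
    return n_params * bits_per_weight // 8


N_PARAMS = 70_000_000_000  # the "casual evening plan" from the interview

for bits in (16, 8, 4):
    gigabytes = weight_bytes(N_PARAMS, bits) / 1e9
    print(f"{bits}-bit weights: {gigabytes} GB")
```

Dropping from 16-bit to 4-bit cuts the weights from about 140 GB to about 35 GB, which changes what spills over far more than closing a few browser tabs does.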

The second interview hurts more

Interviewer asks

Would you take GPU back?

RAM answers

I never had the luxury of leaving. I am soldered into the plot even when I am not soldered onto the board. The OS needs me. The apps need me. The GPU needs me when the model grows too large for its private little palace.

Interviewer asks

What would repair trust?

RAM answers

Transparency. Proper monitoring. Honest device maps. No pretending a 70B model is a casual evening plan. No launching a local assistant and acting shocked when the whole tower starts breathing like a dragon under a blanket.

There is no glamorous answer. Relationships between components survive through capacity planning. Very romantic. Put it on a card.

The ending is not forgiveness yet

At the end of the interview, RAM does not forgive GPU. It reallocates.

GPU stands near the PCIe slot with the exhausted glow of a component that learned desire is measured in watts. The LLM is still there, quiet now, cached in fragments and blamed by everyone. The CPU watches with the administrative distance of a manager who made the calendar invite but says it was optional.

Maybe they continue. Most machines do. The next prompt arrives, and the old triangle forms again. GPU reaches for VRAM. RAM braces for spillover. Storage hopes nobody says swap.

The drama is not that GPU met an LLM. The drama is that RAM saw the truth of modern computing in one ugly burst of fan noise. Everybody wants intelligence. Nobody wants to pay the memory bill.